Well, despite all the arguments in the blogosphere about what process node Palladium’s silicon is, whether the design team is competent, and why it reports into sales, Cadence has announced its latest big revision of Palladium. Someone seems to be able to get things done. Of course it is bigger and faster, and just as we used to have FPGA wars over how many “gates” you could fit in a given product, we now have wars over exactly what capacity and performance mean in emulation. Emulation is a treadmill, though: Cadence announced today, so its product is probably the biggest for now. Mentor’s Veloce was last revved in April last year. I’m not sure about Synopsys (aka EVE aka ZeBu). But for sure both will be revved again, and each will probably be the capacity leader for a time. And for sure their marketing will claim the crown by whatever measure they choose.

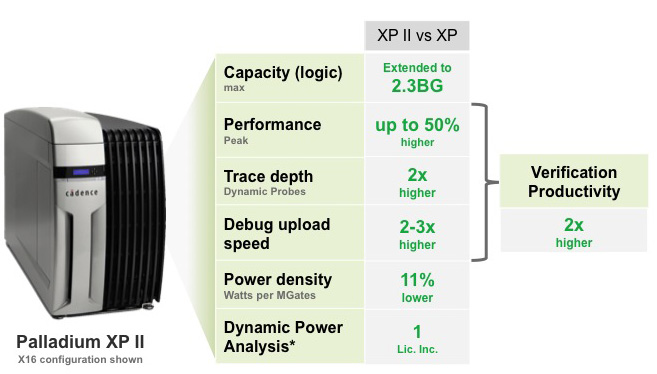

Cadence reckons it has doubled overall verification productivity, thanks to:

- capacity extended to 2.3 billion gates (2.3BG)

- performance up to 50% higher than the previous Palladium XP

- trace depth 2X higher

- debug upload speed 2-3X higher

- power density (of the emulator itself) 11% lower

- dynamic power analysis (of the design being emulated)

I met with Frank Schirrmeister who, like me, spent a lot of time trying to sell virtual platform technology (him at Axys and Virtio, more recently at Synopsys and Cadence; me at VaST and Virtutech). The problem was always the models. They were expensive to produce for standard parts, and for custom blocks they were not the golden representation, so small differences from the RTL would creep in. Now that emulation has gone mainstream, it seems to be the way to get models for everything except the processors: just compile the actual RTL.

This is all really important. I won’t bother to put in charts here because you’ve all seen them. Just as we used to have “design gap” graphs in every presentation, everything to do with SoC design now shows the exploding cost of verification and the rising cost of software development, since the software component of a system has grown so large. With some graphs showing the software component growing to $250M, it is unclear how anyone can afford to design a chip. But software lasts much longer than any given chip. While I can believe that Apple or Google have spent that kind of money on iOS and Android, that software runs on a whole range of different chips over many years. Indeed, that is one aspect of modern chip design: when you start to design a chip, much of the software that will run on it already exists, so you’d better not break it.

Like those ubiquitous Oracle commercials that say “24 of the top 25 banks use Oracle”, Cadence reckons that 90% of the top 30 semiconductor companies use Palladium emulation (not necessarily exclusively: many companies source from multiple vendors, and an emulator has to get really old before you throw it out). It also says that 90% of the top 10 smartphone application processors and 80% of the top 10 tablet application processors use it (are there really that many? After Qualcomm, Apple, Samsung and MediaTek, everyone else must have negligible share), and that the graphics chips of all the top game consoles (I think there are just 3 of those) were verified with Palladium.

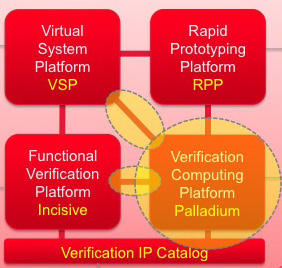

But the more interesting story, I think, is the gradual creation of Verification 2.0, which ties together virtual platforms, emulation, FPGA-based verification (Cadence’s is called RPP) and RTL simulation. As part of the new Palladium, Cadence has also upgraded the links between Palladium and Incisive RTL simulation, and between the Virtual System Platform (VSP) and Palladium, making it easier to run software and hardware together.

One of the tricks to running virtual platforms is that the processor models have to run a reasonable amount of code before synchronizing with the rest of the system. Otherwise the whole system runs like molasses; under the hood this is JIT compilation, after all. How often synchronization is needed depends critically on what the software is doing and how deterministic the hardware is. Ethernet communications or cellphone packets, for example, don’t arrive on a precise clock cycle of the processor; the nearest few million cycles will do. With the improved capabilities, Frank reckons they get a 60X speedup for embedded OS boot (being able to boot an OS quickly is very important whatever you intend to do once it is up, and since the system isn’t ‘up’ yet, there isn’t much going on in the real world to synchronize with) and 10X for production and test software executing on top of the OS on top of the emulated hardware.
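The run-ahead-then-synchronize trick is generally known as temporal decoupling (SystemC TLM-2.0 formalizes it as a global quantum). Below is a minimal Python sketch of the general idea, not of how Cadence’s VSP or Palladium actually implement it; the function names, the toy cost model and the cost numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SyncStats:
    instructions: int  # instructions executed by the processor model
    sync_points: int   # times it stopped to synchronize with the rest of the system


def run_decoupled(total_instructions: int, quantum: int) -> SyncStats:
    """Run a hypothetical processor model ahead in chunks of `quantum`
    instructions, synchronizing only at the end of each chunk."""
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    executed = 0
    syncs = 0
    while executed < total_instructions:
        # Run ahead freely: no interaction with the emulated hardware in here.
        step = min(quantum, total_instructions - executed)
        executed += step
        # Quantum boundary: exchange state and events with the hardware side.
        syncs += 1
    return SyncStats(executed, syncs)


def observed_at(event_time: int, quantum: int) -> int:
    """An external event (e.g. a packet) raised mid-quantum is only seen
    by the processor at the next synchronization boundary."""
    return ((event_time + quantum - 1) // quantum) * quantum


def wall_time(total_instructions: int, quantum: int,
              cost_per_instr: float, cost_per_sync: float) -> float:
    """Toy cost model in arbitrary units: execution cost plus sync overhead."""
    stats = run_decoupled(total_instructions, quantum)
    return stats.instructions * cost_per_instr + stats.sync_points * cost_per_sync


if __name__ == "__main__":
    n = 100_000
    for q in (1, 100, 10_000):
        print(q, run_decoupled(n, q).sync_points, wall_time(n, q, 1.0, 100.0))
```

Raising the quantum from 1 to 10,000 instructions cuts the sync points for 100,000 instructions from 100,000 to 10, at the price of an external event being seen up to one quantum late. That trade-off is why a phase with little real-world traffic, such as OS boot, can use a large quantum. The costs here are arbitrary units, not measurements of VSP or Palladium.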

One of the key lead customers was nVidia. They reckon it improves the software validation cycle and also simplifies post-silicon bringup. And, based on my past experience, they are not an easy customer to please because their chips push the boundaries of every tool.

For Richard Goering’s blog on the announcement, on the Cadence website, go here.

Although test is not quite verification, this week is ITC at the Disneyland Hotel. If you are going, Cadence has an evening reception nearby on Wednesday 11th at the House of Blues (where the Denali party was held the last time DAC was in Anaheim, I think before Cadence acquired Denali). For details of all Cadence presentations at ITC and to register for the evening reception, go here.