Today Cadence announced Tempus, their new timing signoff solution. It has been in development for at least a couple of years and was built from the ground up to be massively parallel. That means not just that different corners can be run in parallel (which is fairly straightforward), but that large designs can also be partitioned across multiple servers. So Tempus scales to 100s of cores and servers. This scalability also means that it can handle designs of essentially any size, and it has already been used to analyze 100s of millions of cells (placeable instances) flat.
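
Running corners in parallel caps the number of independent jobs at the number of views; partitioning the design multiplies it. The sketch below is a toy illustration of that job fan-out only, not of how Tempus works internally. It assumes that partitions can be timed independently (real distributed timing must exchange arrival times and slews across partition boundaries), uses round-robin partitioning as a stand-in for connectivity-aware partitioning, and the view names and `run_job` work are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product


def partition(cells: list[int], n_parts: int) -> list[list[int]]:
    """Split cells into n_parts chunks round-robin (a stand-in for real,
    connectivity-aware partitioning)."""
    return [cells[i::n_parts] for i in range(n_parts)]


def run_job(job: tuple[str, int, list[int]]) -> tuple[str, int, int]:
    """Hypothetical unit of work: time one partition in one view.
    Here it only reports how many cells it was given."""
    view, part_id, part_cells = job
    return view, part_id, len(part_cells)


def schedule(views: list[str], cells: list[int], n_parts: int, workers: int):
    """Fan out every (view, partition) pair as an independent job."""
    parts = partition(cells, n_parts)
    jobs = [(view, i, part) for view, (i, part) in product(views, enumerate(parts))]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(run_job, jobs))


views = ["ss_0p72v_125c", "tt_0p80v_25c", "ff_0p88v_m40c"]  # hypothetical view names
results = schedule(views, cells=list(range(1000)), n_parts=4, workers=8)
print(len(results))  # 3 views x 4 partitions = 12 jobs
```

With only the three views, at most three workers could be kept busy; splitting each view four ways yields twelve jobs.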

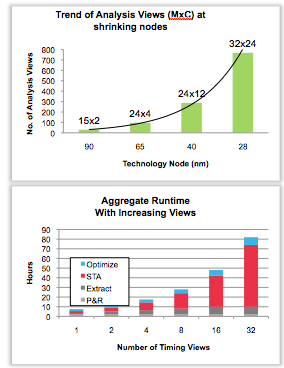

Of course, timing closure is getting more difficult. Designs are getting larger, and increased margins (e.g. for non-self-aligned double patterning at 20nm) make timing closure harder. Plus the number of views that need to be analyzed is also increasing exponentially, leading to extremely long run times (days, not hours).
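
The growth in views comes from combinatorics: every analysis mode is timed at every combination of process, voltage, temperature and RC-extraction corner. The dimensions and values below are purely hypothetical, chosen to show how the count multiplies rather than adds.

```python
from itertools import product
from math import prod

# Hypothetical analysis dimensions; real setups depend on the foundry,
# the process node and the design's operating modes.
dimensions = {
    "mode": ["func", "scan_shift", "scan_capture"],
    "process": ["ss", "tt", "ff"],
    "voltage": ["0p72v", "0p90v"],
    "temperature": ["m40c", "125c"],
    "rc_corner": ["cworst", "cbest", "rcworst", "rcbest", "typical"],
}

# Every combination is a separate view that must be timed.
views = ["_".join(combo) for combo in product(*dimensions.values())]

# The count is the product of the dimension sizes, so each new dimension
# (or each extra value in one) multiplies the total rather than adding to it.
assert len(views) == prod(len(v) for v in dimensions.values())
print(len(views))  # 3 * 3 * 2 * 2 * 5 = 180
```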

Timing closure at 20nm is growing to as much as 40% of the design cycle. I’m assuming that the design cycle here means the synthesis, place-and-route and signoff cycle, and doesn’t include RTL development and verification. As you know, modern SoC design teams largely assemble blocks of IP that have either been purchased or developed internally by dedicated IP development groups. In fact, one of the problems with timing closure is that routability problems in the IP (which often show up as timing violations) only come to light during SoC assembly.

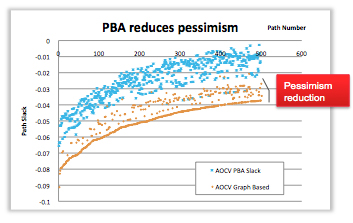

The order-of-magnitude increase in performance means that it is possible to do large amounts of path-based analysis (PBA). PBA traces timing through the actual input pins along each path and propagates the correct slew values. Traditionally this has been too expensive to do extensively, so a more pessimistic approach is used instead: the worst (slowest) input pin is taken as a proxy for all input pins. PBA reduces pessimism by around 2-3%. This has two effects. Firstly, some paths that violate timing without PBA pass with PBA, and so do not need to be addressed during timing closure. Secondly, paths that still fail will miss by a smaller negative slack, and so will not require such aggressive fixes. The recovered margin can also be spent on slower/smaller/lower-power cells.
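
The difference between the worst-pin proxy (graph-based analysis, GBA) and PBA can be seen in a toy model. This is a minimal sketch under stated assumptions: a linear delay/slew model instead of real library tables, only the part of the path from the two-input merge gate onward, and made-up numbers that exaggerate the effect far beyond the 2-3% quoted above. The worst-slew proxy is applied at the gate input as described in the paragraph; production tools differ in the details.

```python
from dataclasses import dataclass


@dataclass
class Cell:
    """Toy linear delay model (all times in ns). Real libraries use
    NLDM/CCS lookup tables indexed by input slew and output load."""
    intrinsic: float   # delay at zero input slew
    k_delay: float     # added delay per ns of input slew
    base_slew: float   # output slew at zero input slew
    k_slew: float      # added output slew per ns of input slew

    def delay(self, in_slew: float) -> float:
        return self.intrinsic + self.k_delay * in_slew

    def out_slew(self, in_slew: float) -> float:
        return self.base_slew + self.k_slew * in_slew


def path_delay(cells: list[Cell], in_slew: float) -> float:
    """Sum stage delays, propagating the slew from each cell to the next."""
    total, slew = 0.0, in_slew
    for cell in cells:
        total += cell.delay(slew)
        slew = cell.out_slew(slew)
    return total


gate = Cell(intrinsic=0.05, k_delay=0.5, base_slew=0.02, k_slew=0.6)
path = [gate, gate, gate]  # first gate is the merge point with two inputs

slow_pin_slew = 0.20  # slew on the other (off-path) input of the first gate
our_pin_slew = 0.02   # slew on the input our path actually uses
required = 0.30       # hypothetical required time at the path endpoint

# GBA-style: the worst input slew stands in for every input pin.
gba_slack = required - path_delay(path, max(slow_pin_slew, our_pin_slew))
# PBA: use the slew of the pin this path really goes through.
pba_slack = required - path_delay(path, our_pin_slew)
print(f"GBA slack {gba_slack:+.3f} ns, PBA slack {pba_slack:+.3f} ns")
```

With these numbers the path fails under the worst-slew proxy and passes once its own slew is propagated, which is the first effect described above.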

Another interesting feature is the ability to do hierarchical/incremental analysis. This makes it possible to look at just a single block (if that is all your team is focused on) while the timing numbers match exactly what a full-chip run would report. So a hierarchical design can be handled within an accurate global context.
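
Why the global context matters can be shown with a single path that starts at the top level and ends inside a block. This is a deliberately simplified sketch with hypothetical numbers: it models only the arrival time annotated on the block's input port, whereas a real block-level context would also carry input slew, output load, clock latency and crosstalk from neighboring top-level nets.

```python
# Hypothetical numbers (ns) for one path that launches at the top level
# and is captured inside a block.
top_delay = 0.35    # launch flop -> block input port (top-level logic and wires)
block_delay = 0.48  # block input port -> capture flop inside the block
required = 1.00     # required time at the capture flop (period minus setup)


def slack(required_time: float, arrival: float) -> float:
    return required_time - arrival


# Reference: the whole chip timed flat.
flat = slack(required, top_delay + block_delay)

# Block-level run with boundary context taken from the full-chip analysis:
# the arrival time at the input port is annotated, not guessed.
port_arrival = top_delay
in_context = slack(required, port_arrival + block_delay)

# Block-level run with a naive default (ideal, zero arrival at the port).
out_of_context = slack(required, 0.0 + block_delay)

print(flat, in_context, out_of_context)
```

The in-context block run reproduces the flat slack exactly, while the naive block run is optimistic because it ignores the delay already spent at the top level.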

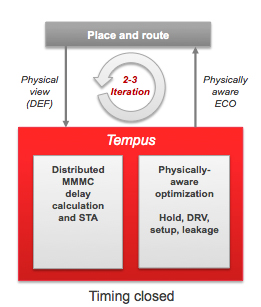

Tempus has a tight iteration loop with place and route, producing physically aware optimization (legalized, DRC-clean placement directives), so that typically 2-3 iterations are enough to close timing. This contrasts with the all-too-common case where each set of fixes causes another set of previously passing paths to fail, the dreaded whack-a-mole situation where timing never really closes.

Cadence have been working with Texas Instruments as a lead customer. One example design (which I assume is a TI design, though it may not be):

- 28nm, 44M instances, 12 views

- Existing flow: 10 days to fix hold violations, and only 7 views could be worked on due to capacity limitations

- Tempus: 99.5% of hold violations fixed in one ECO iteration with no degradation in setup timing. Before using Tempus there were 11,085 timing violations; afterwards there were just 54
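
The quoted percentage follows directly from the before/after counts. This quick check assumes that the 11,085 and 54 figures are the hold violations the 99.5% refers to (the results call them timing violations).

```python
before = 11_085  # violations before the Tempus ECO iteration
after = 54       # violations remaining afterwards

fixed = before - after
pct_fixed = 100 * fixed / before
print(f"{fixed:,} of {before:,} fixed ({pct_fixed:.1f}%)")  # prints "11,031 of 11,085 fixed (99.5%)"
```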

Cadence are working with the foundries to get Tempus qualified for signoff. For smaller fabless companies that cannot afford to do their own correlation, this is of course essential for Tempus to even be considered.

The big challenge I see for Cadence is that it is very hard to replace “standard”, trusted tools that are good enough. That’s great if you are the incumbent: Cadence with Virtuoso, Mentor with Calibre and, of course, Synopsys with PrimeTime. It is what I call predictable pain. PrimeTime may not be the fastest or highest-capacity tool, but it does work. Cadence is betting that if they really can deliver a 10X improvement in design closure productivity, that will be enough to get people to switch. To find out, we will just have to…well…give it time.