During DVCon I met with Steve Bailey to get an update on Mentor’s verification tools. They were also announcing some new capabilities. I also attended Wally Rhines’s keynote (primarily about verification, of course, since this was DVCon; I blogged about that here) and the Mentor lunch (it was pretty much Mentor all day for me), which covered the verification survey that they had recently completed.

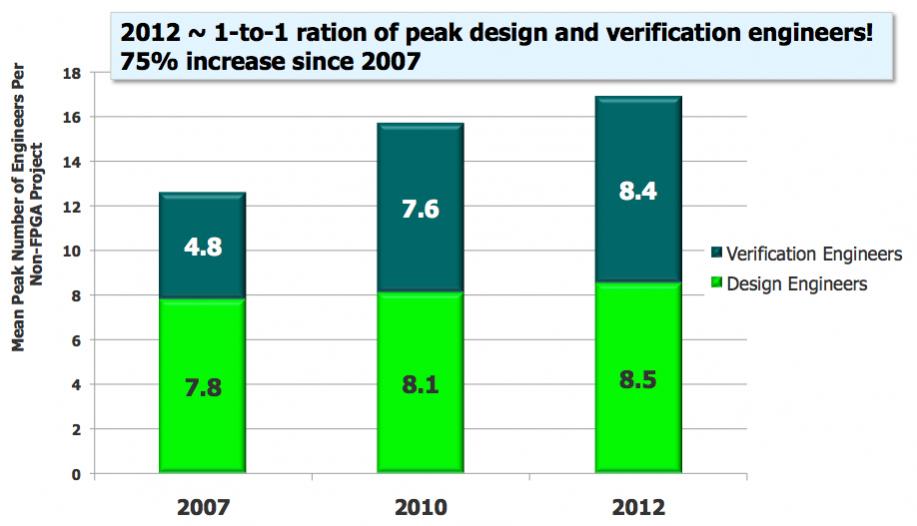

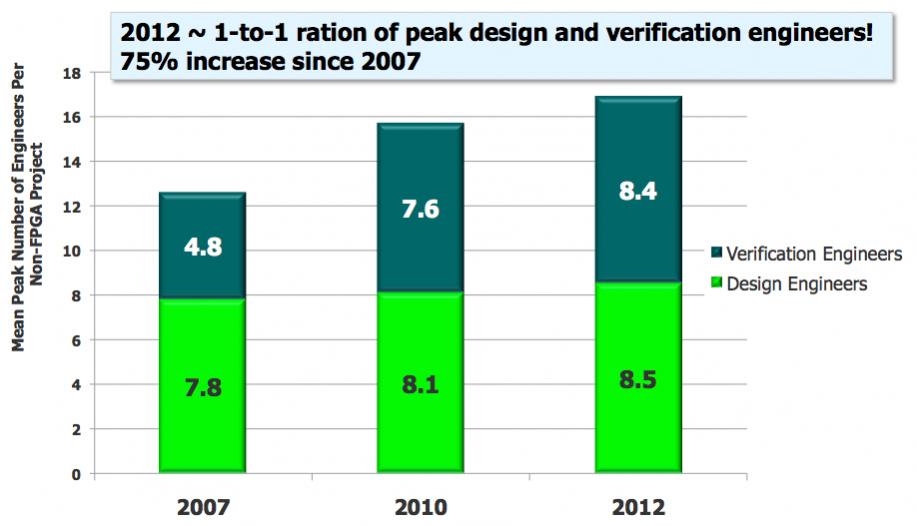

Verification has changed a lot over the past few years. Techniques that were once used only by the most advanced groups doing the most advanced designs have become mainstream. Of course, this has been driven by necessity, as verification has expanded to take up more and more of the schedule. This is evident in the 75% increase in the number of verification engineers on a project since 2007, compared to the minor increase in the number of design engineers.

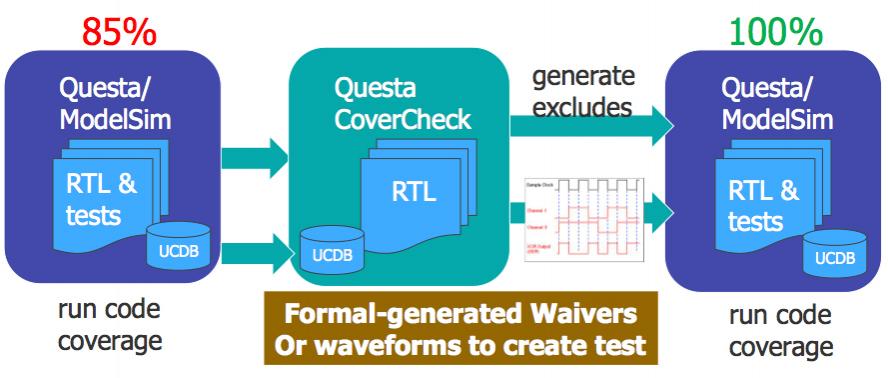

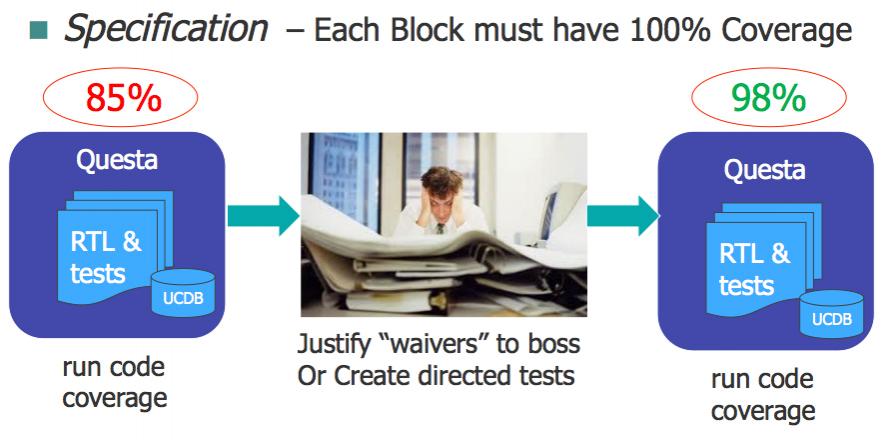

The specification for many designs is that each block must have 100% coverage, or waivers are required. Generating and justifying waivers to “prove” that certain code is unreachable, and so does not need to be covered, is very time-consuming. Nvidia estimated that it spent 9 man-years on code coverage on a recent project. So one new development is Questa CoverCheck, which automates coverage closure. Formally generated waivers for unreachable code reduce the effort of writing manual tests and also eliminate the tedious manual analysis needed to justify waivers to management.
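To make the bookkeeping concrete, here is a minimal sketch of how waivers change the coverage number for a block. It is only an illustration of the arithmetic, not how CoverCheck works internally; the `CoverageItem` type, the function names, and the item names are all made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CoverageItem:
    name: str             # hypothetical label for a statement or branch
    hit: bool             # True if some test exercised the item
    waived: bool = False  # True if the item was shown unreachable and excluded


def effective_coverage(items):
    """Return coverage as a fraction of the items that are not waived."""
    counted = [item for item in items if not item.waived]
    if not counted:
        return 1.0  # everything was waived, so nothing is left to cover
    return sum(item.hit for item in counted) / len(counted)


def open_items(items):
    """Names of items that still need either a test or a justified waiver."""
    return [item.name for item in items if not item.hit and not item.waived]


# Hypothetical block: two items hit, one waived as unreachable, one still open.
block = [
    CoverageItem("fsm.idle_to_run", hit=True),
    CoverageItem("fsm.run_to_done", hit=True),
    CoverageItem("fsm.default_branch", hit=False, waived=True),
    CoverageItem("fifo.overflow", hit=False),
]

print(f"effective coverage: {effective_coverage(block):.0%}")
print("still open:", open_items(block))
```

In this toy block the waived item drops out of the denominator, so the only thing standing between the block and 100% is the one open item, which needs either a test or its own waiver.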

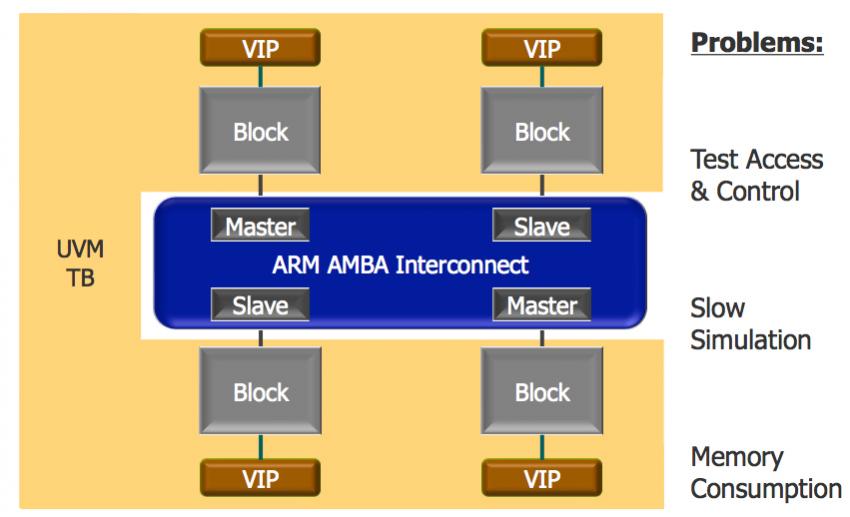

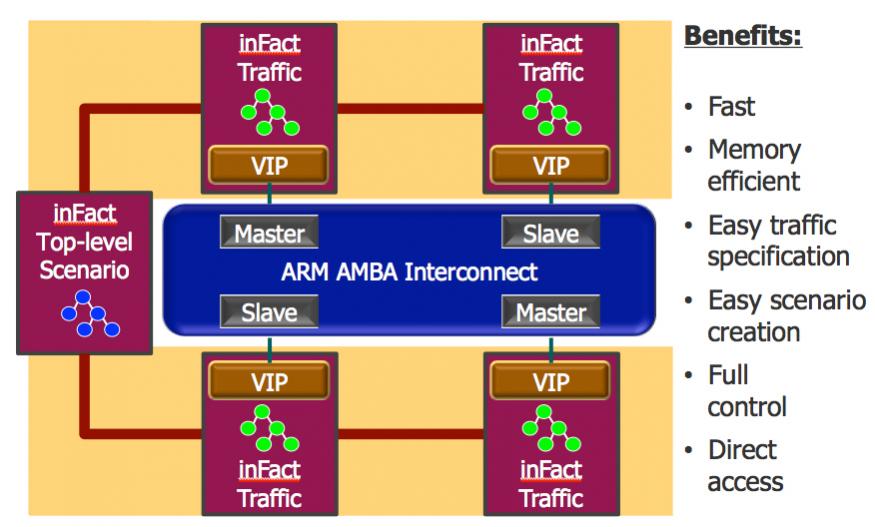

Another new capability is in the area of interconnect verification. Trying to set up all the blocks on a modern SoC so that they generate the required traffic on the interconnect is very time-consuming to do by hand. The simulation is also large, requires a lot of memory and runs slowly. Instead, inFact can be used to generate the traffic more explicitly, replacing the actual blocks of the design with traffic generators that work much more directly.
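As a rough illustration of the idea of driving the interconnect directly rather than through the real blocks, the sketch below enumerates every initiator/target/operation combination once and shuffles the order. This is an assumption about what "traffic generation" could look like in the simplest case, not a description of inFact's algorithm; the function name and the block names are hypothetical.

```python
import itertools
import random


def generate_traffic(initiators, targets, ops=("read", "write"), seed=0):
    """Return every (initiator, target, op) combination once, in shuffled order."""
    combos = list(itertools.product(initiators, targets, ops))
    # A seeded generator keeps the order reproducible from run to run.
    random.Random(seed).shuffle(combos)
    return combos


# Hypothetical SoC with two initiators and three targets on the interconnect.
traffic = generate_traffic(["cpu", "dma"], ["ddr", "sram", "uart"])

for initiator, target, op in traffic:
    print(f"{initiator} -> {target}: {op}")
```

Because each combination appears exactly once, every path through the interconnect is exercised without having to program the real blocks into producing that traffic.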

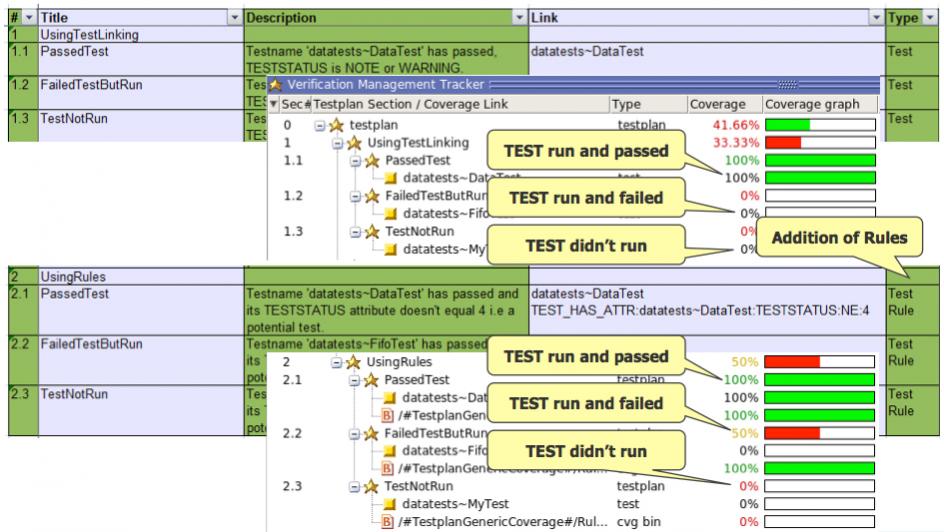

Mentor also has tools for rules-based verification that give verification engineers and, especially, project management insight into how far along verification really is. When this is done ad hoc, it always seems that verification is nearly complete for most of the schedule. As the old joke goes, it takes 90% of the time to do the first 90% of the design, and then the second 90% of the time to do the remaining 10%. By switching to rules-based verification, the visibility is both improved and made more accurate.
