Tech aficionados love roadmaps. The confidence a roadmap instills – whether using tangible evidence or just a good story – can be priceless. Decisions on “the next big thing”, sometimes years and a lot of uncertain advancements away, hinge on the ability of a technology marketing team to define and communicate a roadmap.

Any roadmap has three fundamental pieces: reality, probability, and fantasy. The first two, taken together, are critical to success. A good reality is better, but even a relatively dismal current product situation can be overcome, if there is some credibility left, on the strength of the probability story in the middle. (I actually created and told a crappy reality but good probability roadmap story once this way: “We took a vacation. We’re back, and here’s what we’re doing based on what we heard customers say they wanted.” It was true; we were a new marketing team with experience, and we spent a lot of time with hundreds of customers on the listening part to get the next thing we said right.) Companies that fail to execute on the probability story – the absolute must-have for customers that have bought in – risk losing credibility fast.

If both the reality and probability stories and execution hold up, attention turns to the fantasy portion. A fantasy has a lot of components: difficult enough to be interesting, achievable enough to look believable, and dramatic enough to get people excited. The fantasy part of the roadmap evolves: if successful, it becomes the probability portion, with more definition and firmer timeframes, and it gets triumphantly replaced by a new and improved fantasy. If not successful, it gets replaced anyway with a different, hopefully improved vision.

We are seeing one of the bigger roadmap marketing efforts of our time right now, weaving a story around the progression from 28nm, to 22/20nm, to 14nm and beyond.

We know 28nm processes are relatively solid now, having endured most of the transition woes in getting any process technology to volume. We have a fairly good estimate of the limits of the technology: the consensus is that a 3GHz ARM Cortex-A9 is the fastest core we’ll see in 28nm. Foundries are churning out parts, more and more IP is showing up, and things are going relatively well.

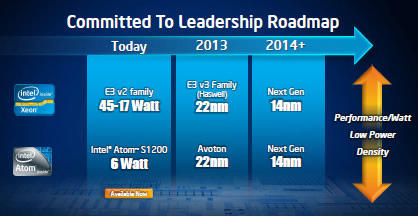

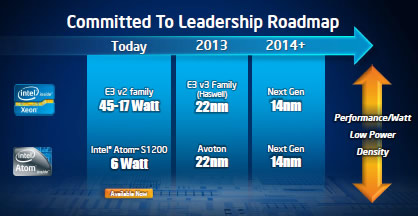

At the other end, the industry went giddy when Altera and Intel recently announced they will work together on 14nm. There is some basis in their earlier cooperation on “Stellarton”, a primitive attempt at an Atom core and some FPGA gates in a single package. The most definite thing in this new announcement is Intel is looking to have a 14nm process up “sometime in 2014”, which is usually code for December 32nd, with some slack. In a best case scenario, we’d probably see an Altera part – sampled, count them, there’s one – about two years from right now.

Difficult? Yep. Billion-dollar fab, new FinFETs, big chips. Achievable? Sure. If there is any way to prove out a new process, it is with memory or programmable logic, mostly uniform structures that can be replicated easily. Dramatic? A mix of people saying Altera is now ahead, Xilinx is suddenly behind, and Intel is completely transforming itself into a leading foundry. Wow. We’ll leave the discussion on high-end FPGA volumes for another time.

What we should be discussing more is the probability story, and that lies in the area of 20nm. It is what people are actually facing in projects now, and there are some changes from the 28nm practices that are extremely important to understand. Cadence has released a new white paper, “A Call to Action: How 20nm Will Change IC Design”, discussing some of these ideas.

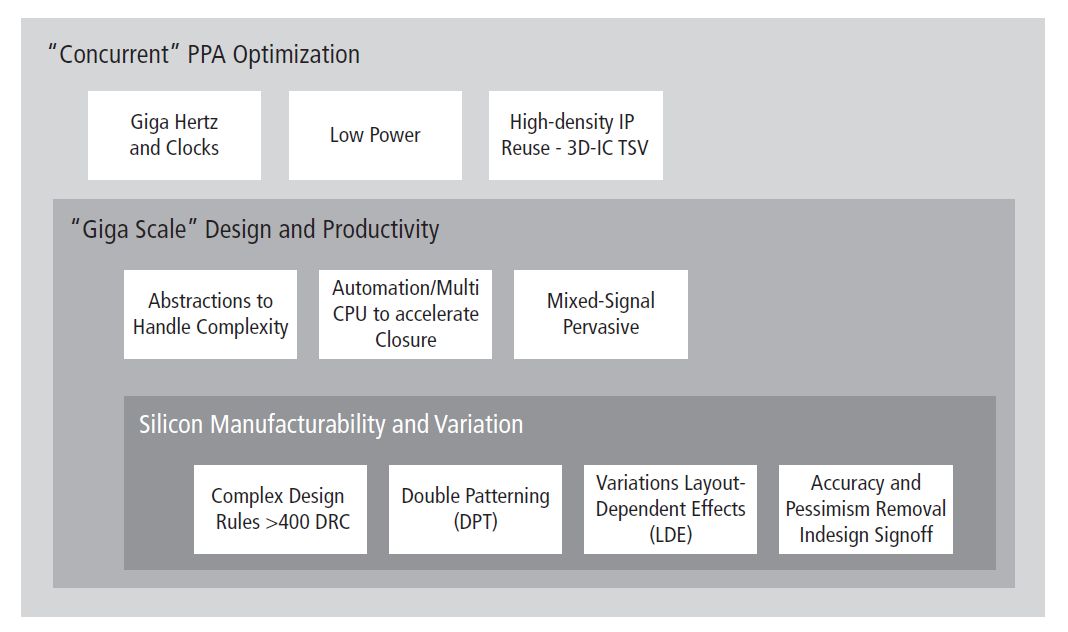

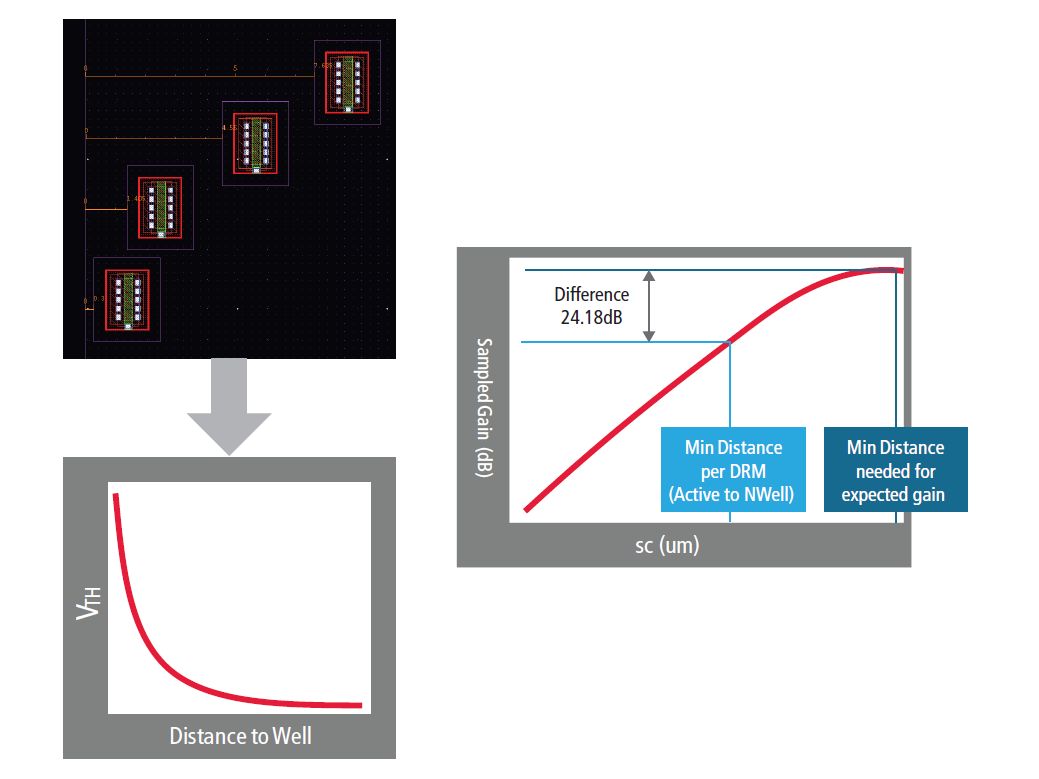

Among the changes Cadence identifies, the first is obvious: double patterning, with a good discussion of what to do about it. Another area of concern is the increasing amount of mixed-signal integration, something designers have tended to avoid. That factors into the third area, layout-dependent effects and better design rule checking. An interesting quote:

> At 20nm up to 30% of device performance can be attributed to the layout “context,” that is, the neighborhood in which a device is placed.

The final discussion is on better modeling and concurrent PPA optimization, dealing with the disparities in IP blocks from many sources – 85 blocks in a typical SoC today, and growing – in the clock and power domains. This is a key part of Cadence’s approach to 28nm, and becomes even more important at 20nm and beyond.

Dealing with the probabilities will tell us more than any press release on what might be “the next big thing.” If you’re looking at what you’ll face in moving to 20nm, the Cadence white paper is a good introduction. What other design issues are you seeing in the transition from 28nm to 20nm? Am I too pessimistic on the 14nm story, or just realistic that there are a lot of difficult things to solve between here and there? Thoughts welcome.
